DALL-E 3 vs Midjourney 2026: The Real Comparison

Quick takeaway

Choose DALL-E 3 if: You need precise prompt adherence, readable text in images, photorealistic people and products, or outputs that execute your specific creative direction accurately.

Choose Midjourney if: You want visually distinctive, artistically interpreted images, editorial and conceptual photography aesthetics, or consistent high-quality results with more creative latitude in how the prompt is interpreted.

DALL-E 3 and Midjourney are both capable AI image generators and both are available on Cliprise. They are not interchangeable. They make fundamentally different trade-offs in how they respond to prompts and what visual qualities they prioritize - and understanding those differences saves significant time in production.

For gallery-heavy or art-directed briefs, Cliprise also works as an AI art generator platform: Midjourney's stylized output sits next to DALL-E 3's literal execution in the same launcher.

When the deliverable is packaging, UI mocks, or other literal stills, the same hub exposes Cliprise's text-to-image workspace, so you are not switching between separate tools for literal and stylized work.

This comparison covers the dimensions that actually matter in practice: prompt adherence, artistic style, photorealism, text rendering, and specific use case performance. Both models were tested across the same prompts on Cliprise.

How They Are Different at the Foundation

DALL-E 3 - Instruction Execution

DALL-E 3 is built around faithful execution of what you describe. If your prompt specifies a composition, a lighting setup, a color palette, specific included elements, or a precise visual arrangement, DALL-E 3 attempts to deliver exactly that.

This makes it predictable. You describe a scene; you get that scene. You specify that the subject should be in the lower third of the frame with negative space above; DALL-E 3 follows that instruction. You ask for a specific color temperature and lighting direction; the output reflects it.

The trade-off: the faithfulness comes with less spontaneous creativity. DALL-E 3 executes; it does not add artistic interpretation that wasn't explicitly prompted.

Midjourney - Artistic Interpretation

Midjourney interprets prompts with significant creative latitude. It produces images that feel like they were made by someone with a strong visual sensibility - images that are often more interesting than a literal execution of the prompt because Midjourney makes aesthetic decisions alongside following your instructions.

The trade-off: Midjourney's interpretive approach means it may not deliver exactly what you specified. If you need precise compositional control or specific included elements, Midjourney may make creative choices you didn't ask for. If you want images that look visually distinctive and art-directed, Midjourney's latitude is an asset.

Prompt Adherence: Who Follows Instructions Better

Winner: DALL-E 3, clearly.

Test the same compositional instruction on both models:

Prompt: "Product shot of a glass bottle on the left side of the frame, looking at it from slightly above, clean white marble surface, dramatic side lighting from the right, significant negative space on the right side for text placement"

DALL-E 3 executes this reliably. The bottle is on the left, the lighting comes from the right, the negative space is present. What you described is what you get.

Midjourney produces a beautiful image of a bottle - but the composition may shift, the lighting direction may differ, and the negative space may or may not be there. It aims for the best-looking image, not the one that matches the specification most precisely.

When this matters: E-commerce product photography, social media templates where layout is fixed, marketing assets where specific compositions are required, thumbnails where placement is part of the design. In all of these, DALL-E 3's literal adherence is a significant practical advantage.

When it doesn't matter: Creative exploration, conceptual imagery, editorial photography where you want the model to make interesting choices.

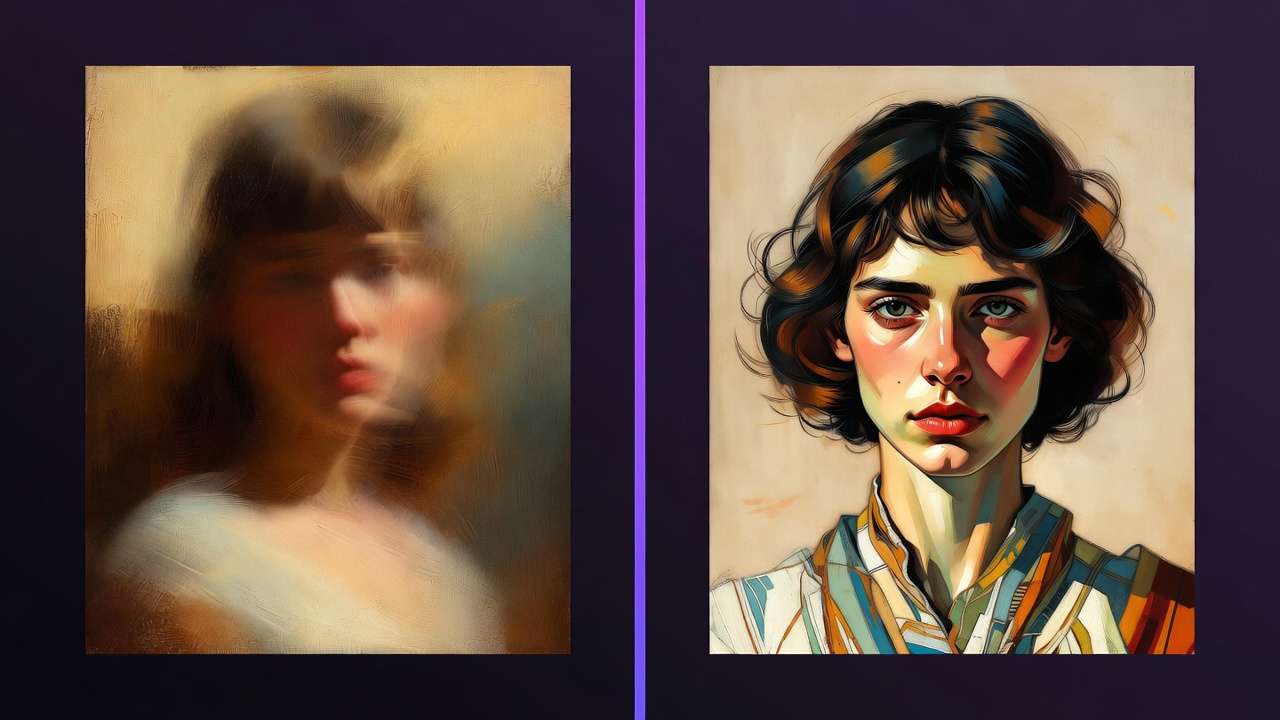

Artistic Style: Visual Quality and Aesthetic

Winner: Midjourney, in most creative contexts.

This is where Midjourney leads clearly. Its default outputs have a visual distinctiveness - a sense of careful composition, interesting light, and considered aesthetic choices - that DALL-E 3 does not match by default.

Midjourney images often look like they were shot by a photographer with a strong point of view. Editorial portraits, atmospheric landscapes, fashion imagery, conceptual illustrations - Midjourney produces these with a visual confidence that is immediately apparent.

DALL-E 3 produces technically accurate, clean, and often attractive images. But they tend toward a more neutral aesthetic - well-executed rather than visually distinctive.

The key distinction: DALL-E 3's neutrality is a feature when you want your direction to come through without the model adding its own personality. Midjourney's aesthetic is a feature when the image itself needs to carry visual interest.

When DALL-E 3 wins on style: Clinical product photography, technical illustrations, images that need to look "real" rather than artistic, contexts where the model's aesthetic personality would be distracting.

When Midjourney wins on style: Editorial and fashion photography, atmospheric and conceptual imagery, illustration-forward content, any context where visual distinctiveness is the goal.

Photorealism: Which Looks More Like a Real Photo

Winner: DALL-E 3 for people and products. Midjourney for environments and scenes.

For portraits and people, DALL-E 3 produces more consistently realistic skin texture, natural expressions, and anatomically accurate hands. Midjourney's portraits often look beautiful but slightly stylized - more like a very good illustration or a heavily retouched photo than a raw photograph.

For product photography, DALL-E 3's material rendering is strong - fabric, glass, metal, ceramic all read convincingly as real objects. The neutrality of its style works in its favor here.

For environmental scenes - landscapes, architectural interiors, atmospheric settings - Midjourney's interpretive approach produces environments that feel richly real in an experiential sense, even if not strictly photographic. An overcast autumn forest from Midjourney has atmosphere that DALL-E 3 often doesn't match.

Practical takeaway: For product pages, team photos, and press images where photographic realism is the goal, use DALL-E 3. For mood boards, atmospheric background images, and environments where the feeling of a place matters more than forensic accuracy, use Midjourney.

Text in Images: A Significant Difference

Winner: DALL-E 3, by a wide margin.

DALL-E 3 can render short text phrases, labels, signs, and captions in images with reasonable accuracy. Words come out legible. This makes it usable for:

- Product mockups with visible brand names

- Marketing images with readable taglines

- Thumbnails with text elements baked in

- Social media graphics with short text

Midjourney struggles with text. Words are often garbled, letters misrendered, or text distorted into visual noise that looks like writing but isn't readable. For any image where text legibility matters, Midjourney is not a practical choice.

Note: If you need Midjourney-quality aesthetics with reliable text rendering, Ideogram v3 is specifically designed for text-in-image generation and outperforms both models on this dimension. See Ideogram v3 vs Midjourney Text Rendering →

Side-by-Side: Use Case Routing

| Use case | Better choice | Why |
|---|---|---|
| E-commerce product on white background | DALL-E 3 | Precise execution, accurate materials |
| Editorial fashion photography | Midjourney | Visual distinctiveness, aesthetic quality |
| LinkedIn headshots / team photos | DALL-E 3 | Photorealistic people, accurate skin |
| Atmospheric landscapes / mood boards | Midjourney | Environmental depth, artistic interpretation |
| Product mockups with text labels | DALL-E 3 | Readable text rendering |
| Conceptual / artistic illustration | Midjourney | Creative interpretation, visual confidence |
| Marketing template with fixed composition | DALL-E 3 | Precise compositional adherence |
| Album art / music imagery | Midjourney | Visual energy and distinctiveness |
| Food photography | DALL-E 3 | Material accuracy, clean execution |
| Book covers / poster art | Midjourney | Compositional artistry, aesthetic range |
| Social media graphics with text | DALL-E 3 | Text legibility + clean execution |
| Social media imagery without text | Either, test both | Depends on brand aesthetic |
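
If you route work programmatically, the table above can be captured as a simple lookup. This is only a sketch: the `ROUTES` dictionary and the `route` function are hypothetical names introduced here for illustration, not part of Cliprise or either model's tooling.

```python
# Hypothetical routing table mirroring the use-case table in this article.
# Keys are lowercased use-case labels; values are the suggested model.
ROUTES = {
    "e-commerce product on white background": "DALL-E 3",
    "editorial fashion photography": "Midjourney",
    "linkedin headshots / team photos": "DALL-E 3",
    "atmospheric landscapes / mood boards": "Midjourney",
    "product mockups with text labels": "DALL-E 3",
    "conceptual / artistic illustration": "Midjourney",
    "marketing template with fixed composition": "DALL-E 3",
    "album art / music imagery": "Midjourney",
    "food photography": "DALL-E 3",
    "book covers / poster art": "Midjourney",
    "social media graphics with text": "DALL-E 3",
    "social media imagery without text": "test both",
}


def route(use_case: str) -> str:
    """Return the suggested model, falling back to 'test both' for unknown cases."""
    return ROUTES.get(use_case.strip().lower(), "test both")


if __name__ == "__main__":
    print(route("Editorial fashion photography"))
```

Anything not listed falls back to "test both", which matches the article's advice for cases that depend on brand aesthetic.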

Prompt Style Differences

Because the models interpret prompts differently, the most effective prompting approach differs between them.

DALL-E 3: Be Specific and Compositional

DALL-E 3 responds well to specific, descriptive prompts that include compositional direction, technical photography language, and explicit element specifications.

Product photograph of a ceramic espresso cup,
matte white finish, shot from slightly above at a 45-degree angle,
on a warm grey concrete surface,
dramatic directional lighting from the upper left,
shallow depth of field, bokeh background,
professional food photography style

Include: composition instructions, lighting direction, camera angle, specific included elements.

Midjourney: Tone and Reference, Less Literal Direction

Midjourney responds better to atmospheric descriptions and stylistic references than to literal compositional instructions.

Espresso cup at dawn, warm winter morning,
condensation on ceramic, steam rising,
cafe window light, intimate and quiet,
magazine editorial photography

Include: mood, atmosphere, time/light quality, stylistic references. Let Midjourney make the compositional decisions.
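
The two prompt styles can be treated as two small templates fed from the same brief. The sketch below assumes nothing about either model's API; `literal_prompt` and `mood_prompt` are hypothetical helper names that only assemble text in the order each style favors.

```python
def literal_prompt(subject, camera, surface, lighting, extras=()):
    """DALL-E 3 style: explicit subject, camera angle, surface, and lighting direction."""
    parts = [f"Product photograph of {subject}", camera, f"on {surface}", lighting, *extras]
    # Drop empty fields so optional details can be omitted cleanly.
    return ", ".join(p for p in parts if p)


def mood_prompt(subject, mood, light, style):
    """Midjourney style: mood, light quality, and a stylistic reference; no layout instructions."""
    parts = [subject, mood, light, style]
    return ", ".join(p for p in parts if p)


if __name__ == "__main__":
    print(literal_prompt(
        "a ceramic espresso cup",
        "shot from slightly above at a 45-degree angle",
        "a warm grey concrete surface",
        "dramatic directional lighting from the upper left",
        extras=("shallow depth of field",),
    ))
    print(mood_prompt(
        "Espresso cup at dawn",
        "intimate and quiet",
        "cafe window light",
        "magazine editorial photography",
    ))
```

Keeping the templates separate makes it harder to send a layout-heavy prompt to Midjourney or a mood-only prompt to DALL-E 3 by accident.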

For detailed prompting technique for both models, see AI Prompt Engineering 2026 → and Perfect Prompts →.

Consistency Across a Series

If you need multiple images that look like they came from the same shoot - consistent lighting, consistent aesthetic, consistent character - both models support seed values for reproducibility.

In Cliprise, note the seed from a generation you like and use the same seed with modified prompts to maintain visual consistency across multiple images in a series.

DALL-E 3's consistency advantage: Because it follows compositional instructions precisely, a consistent prompt structure produces consistently similar outputs. You can maintain brand look through systematic prompting.

Midjourney's consistency approach: Lock seeds for character consistency. Use consistent style reference descriptions to maintain aesthetic continuity.

See Seeds & Consistency → for the complete approach.
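
As a rough illustration of the seed workflow described above, a series can be planned as a list of requests that share one seed and vary only the prompt. The request shape here (`prompt` and `seed` keys) and the `series_requests` helper are assumptions made for this sketch; they do not describe Cliprise's actual interface.

```python
def series_requests(base_prompt, variations, seed):
    """Build one request per variation, all reusing the same seed for visual consistency."""
    return [
        {"prompt": f"{base_prompt}, {variation}", "seed": seed}  # hypothetical request shape
        for variation in variations
    ]


if __name__ == "__main__":
    requests = series_requests(
        "ceramic espresso cup on warm grey concrete, directional light from upper left",
        ["front view", "three-quarter view", "top-down view"],
        seed=1234,  # the seed noted from a generation you liked
    )
    for request in requests:
        print(request)
```

Only the variation text changes between requests, which keeps the base description and the seed as the fixed anchors of the series.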

Other Image Models Worth Considering

DALL-E 3 and Midjourney are not the only strong image models on Cliprise. Depending on your use case:

Flux 2 - for photorealistic image generation, particularly portraits and commercial photography, Flux 2 often outperforms both DALL-E 3 and Midjourney. See Flux 2 Pro vs Midjourney → and Flux 2 vs Midjourney vs Imagen 4 →.

Google Imagen 4 - strong color accuracy and photorealistic rendering. See Midjourney vs Google Imagen 4 →.

Ideogram v3 - if text in images is important, Ideogram v3 is specifically designed for this and outperforms both models. See Ideogram v3 vs Midjourney Text Rendering →.

Nano Banana 2 - for character consistency across a multi-image series, Nano Banana 2's character system offers capabilities that DALL-E 3 and Midjourney don't match directly.

The practical advantage of Cliprise is access to all of these models under one subscription - you can test a prompt across multiple models and route each production task to the best tool for it.

The Honest Summary

DALL-E 3 and Midjourney make different bets. DALL-E 3 bets that you know what you want and will tell it precisely - and that faithful execution is the goal. Midjourney bets that a skilled creative interpretation of your prompt will produce something better than a literal rendering.

Both bets are right in different situations.

For production work where specifications matter - e-commerce, marketing templates, press images, anything with fixed compositional requirements - DALL-E 3 is the more reliable tool.

For creative work where visual quality and distinctiveness matter more than precise execution - editorial, artistic, mood imagery, conceptual photography - Midjourney produces better results more consistently.

Many image production workflows benefit from using both, routing each content type to the model that suits it.

Note

Both DALL-E 3 and Midjourney are on Cliprise. Test the same prompt on both models and see which fits your workflow - no separate subscriptions needed. Compare All Models →

Related Articles

Other image model comparisons:

- Flux 2 Pro vs Midjourney: Photorealism →

- Flux 2 vs Midjourney vs Google Imagen 4 →

- Midjourney vs Google Imagen 4: Style Comparison →

- Ideogram v3 vs Midjourney: Text Rendering →

- Best AI Image Generator 2026: Tested and Ranked →

- Seedream vs Midjourney: Budget Showdown →

Alternatives to Midjourney:

- Top 5 Midjourney Alternatives 2026 →

- Best Midjourney Alternative 2026 →

- Cliprise vs Midjourney: One Model vs 47 →

Prompting for image generation:

- AI Prompt Engineering 2026 →

- Perfect Prompts: Cinematic AI Scenes →

- Negative Prompts Guide →

- Seeds & Consistency →

Image generation guides:

- AI Image Generation 2026: Complete Guide →

- Midjourney on Cliprise: Integration Guide →

- Photorealistic AI Image Models: Workflow Guide →

Models on Cliprise: